NIST vs EU AI Act: Which AI Risk Management Framework Is Right for Your Business?

Introduction

As artificial intelligence becomes deeply embedded in business operations, healthcare systems, and daily life, the central question is how businesses will manage AI risks. Organizations deploying AI systems today face a complex landscape of regulatory requirements, ethical considerations, and technical challenges that demand structured approaches to risk management.

Two major frameworks have emerged as frontrunners in the global effort to create responsible AI systems: the NIST AI Risk Management Framework from the United States and the EU AI Act from the European Union. While both aim to ensure AI safety and trustworthiness, they take fundamentally different approaches: one is voluntary and principle-based, the other mandatory and legally binding.

For organizations operating across borders or planning to scale internationally, understanding these frameworks is a prerequisite for building AI systems that are resilient, trustworthy, and capable of navigating an increasingly regulated global landscape. This guide will help you understand both frameworks, their key differences, and, most importantly, which one (or both) your organization should follow.

What is the NIST AI Risk Management Framework?

The National Institute of Standards and Technology AI Risk Management Framework, released in January 2023, represents the United States' approach to managing the risks associated with artificial intelligence. Unlike regulatory mandates, NIST offers a voluntary, flexible framework designed to help organizations of all sizes and sectors identify, assess, and mitigate AI-related risks.

What makes NIST unique is its technology-neutral and use-case agnostic nature. The framework doesn't prescribe specific technical solutions or rigid compliance checklists. Instead, it provides a structured methodology that organizations can adapt to their specific contexts, whether they're developing medical diagnostic AI, financial trading algorithms, or customer service chatbots.

Core Functions of NIST

The NIST framework is built around four interconnected core functions that guide organizations through the complete lifecycle of AI risk management:

1. Govern

This function establishes the foundation for responsible AI development. It involves creating organizational cultures, policies, and processes that prioritize ethical AI use. Governance includes defining roles and responsibilities, establishing accountability mechanisms, and ensuring leadership commitment to AI risk management. Organizations must cultivate a culture where risk awareness is embedded into every decision.

2. Map

The mapping function requires organizations to understand their AI context comprehensively. This means identifying all AI systems in use, documenting their purposes, understanding their potential impacts on individuals and communities, and recognizing interdependencies with other systems. Mapping also involves cataloging the types of data used, the stakeholders affected, and the specific risks associated with each AI application.

3. Measure

Measurement involves quantifying and assessing identified risks through testing, evaluation, and monitoring. Organizations need to establish metrics for AI system performance, fairness, reliability, and safety. This includes analyzing training data for bias, evaluating model outputs across diverse populations, and tracking system behavior over time. Regular measurement ensures that AI systems continue to perform as intended throughout their operational lifecycle.

4. Manage

The management function focuses on responding to identified risks through mitigation strategies, resource allocation, and continuous improvement. This includes implementing technical controls, establishing monitoring systems, creating incident response plans, and documenting decisions. Organizations must also plan for regular reviews and updates as AI systems evolve and new risks emerge.
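One way to picture how the four functions fit together is a single risk record that carries one field per function. The following is a minimal sketch, not part of the NIST framework itself: the `RiskEntry` layout, its field names, and the `open_functions` helper are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class RmfFunction(Enum):
    GOVERN = "Govern"
    MAP = "Map"
    MEASURE = "Measure"
    MANAGE = "Manage"


@dataclass
class RiskEntry:
    """One identified risk for one AI system (hypothetical record layout)."""
    description: str
    owner: str                        # Govern: who is accountable
    affected_stakeholders: List[str]  # Map: who could be impacted
    metric: str                       # Measure: how the risk is tracked
    mitigation: str = ""              # Manage: planned response, empty if none yet

    def open_functions(self) -> List[RmfFunction]:
        """Return the functions that still have no content for this risk."""
        gaps = []
        if not self.owner:
            gaps.append(RmfFunction.GOVERN)
        if not self.affected_stakeholders:
            gaps.append(RmfFunction.MAP)
        if not self.metric:
            gaps.append(RmfFunction.MEASURE)
        if not self.mitigation:
            gaps.append(RmfFunction.MANAGE)
        return gaps


entry = RiskEntry(
    description="Model outputs differ across demographic groups",
    owner="ML lead",
    affected_stakeholders=["job applicants"],
    metric="selection rate gap",
)
print([f.value for f in entry.open_functions()])  # ['Manage']
```

A record like this makes gaps visible: the example risk has an owner, mapped stakeholders, and a metric, but no mitigation yet, so only the Manage function is still open.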

The NIST Approach

NIST emphasizes a risk-based, stakeholder-inclusive approach that recognizes AI risk management as an ongoing process rather than a one-time compliance exercise. The framework encourages organizations to engage with diverse stakeholders including affected communities, domain experts, and end users throughout the AI lifecycle.

The framework also acknowledges that AI risks are socio-technical in nature, meaning they arise from the interaction between technology, people, and organizational contexts. This holistic perspective pushes organizations to consider not just technical failures but also potential societal harms, ethical concerns, and fairness issues. By remaining voluntary and adaptable, NIST allows organizations to tailor their risk management practices to their specific risk tolerance, resources, and operational environment.

What is the EU AI Act Risk Management Framework?

The European Union AI Act, provisionally agreed upon in December 2023 and formally adopted in 2024, represents the world's first comprehensive legal framework for artificial intelligence. Unlike NIST's voluntary guidelines, the EU AI Act is binding legislation that will apply to all AI systems used within the EU market, regardless of where the provider is located.

The EU AI Act takes a risk-based regulatory approach, categorizing AI systems into four risk levels: unacceptable risk (prohibited), high-risk (strictly regulated), limited risk (transparency requirements), and minimal risk (largely unregulated). This tiered system means that not all AI applications face the same regulatory burden; the level of oversight scales with the potential for harm.

Risk Categories Under the EU AI Act

Unacceptable Risk (Prohibited)

AI systems that pose clear threats to safety, livelihoods, or fundamental rights are banned. This includes social scoring systems by governments, real-time biometric identification in public spaces for law enforcement (with narrow exceptions), AI that exploits vulnerable groups, and systems that manipulate human behavior in harmful ways. Apart from those narrow exceptions, the prohibition is absolute: these systems cannot be placed on the market or used in the EU.

High-Risk Systems

High-risk AI systems must comply with strict requirements before they can be placed on the EU market. These include AI used in critical infrastructure, educational assessment, employment decisions, essential services (like credit scoring), law enforcement, migration management, and administration of justice. Providers of high-risk systems must establish quality management systems, maintain detailed documentation, ensure data governance standards, provide transparency to users, implement human oversight mechanisms, and achieve appropriate levels of accuracy, robustness, and cybersecurity.

Limited Risk

These systems primarily face transparency obligations. For example, users must be informed when they're interacting with AI systems like chatbots, when AI is used to generate or manipulate content (deepfakes), or when biometric categorization or emotion recognition systems are deployed. The key requirement is disclosure: people have the right to know when AI is involved in their interactions.

Minimal Risk

Most AI applications fall into this category, including spam filters, AI-enabled video games, and inventory management systems. These face no additional regulatory requirements beyond existing laws, allowing innovation to proceed largely unimpeded for low-risk applications.
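As a rough illustration of the tiering, the examples named above can be arranged into a lookup table. This is a sketch only: the `RiskTier` enum, the use-case labels, and the `triage` function are hypothetical names, and real classification depends on legal analysis of the Act's definitions rather than on a label match.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "prohibited"
    HIGH = "strictly regulated"
    LIMITED = "transparency requirements"
    MINIMAL = "largely unregulated"


# Illustrative examples drawn from the categories described above.
# The keys are hypothetical labels, not terms defined by the Act.
EXAMPLE_USE_CASES = {
    "government_social_scoring": RiskTier.UNACCEPTABLE,
    "credit_scoring": RiskTier.HIGH,
    "employment_decisions": RiskTier.HIGH,
    "customer_service_chatbot": RiskTier.LIMITED,
    "deepfake_generation": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
    "inventory_management": RiskTier.MINIMAL,
}


def triage(use_case: str) -> Optional[RiskTier]:
    """Return the example tier for a known label, or None if it needs review."""
    return EXAMPLE_USE_CASES.get(use_case)


for label in ("credit_scoring", "spam_filter", "medical_triage_assistant"):
    tier = triage(label)
    print(label, "->", tier.name if tier else "needs legal review")
```

Returning `None` for an unlisted use case is deliberate: an unknown system is routed to review instead of being assumed minimal risk.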

Compliance Requirements

For high-risk AI systems, the EU AI Act mandates specific technical and organizational measures. Providers must conduct conformity assessments, register their systems in an EU-wide database, implement post-market monitoring systems, and report serious incidents. The regulation also establishes significant penalties for non-compliance, with fines for the most serious violations reaching up to 35 million euros or 7% of global annual turnover, whichever is higher.
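The "whichever is higher" rule is easy to misread, so a short worked calculation may help. The sketch below uses only the two figures quoted above; the function name `max_fine_eur` is hypothetical, amounts are in whole euros, and the result is the upper bound of a fine, not a prediction of what a regulator would actually impose.

```python
FIXED_CAP_EUR = 35_000_000   # fixed ceiling quoted in the text
TURNOVER_SHARE_PERCENT = 7   # percentage of global annual turnover


def max_fine_eur(global_annual_turnover_eur: int) -> int:
    """Upper bound of the fine: the higher of the fixed cap and 7% of turnover."""
    turnover_based = global_annual_turnover_eur * TURNOVER_SHARE_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)


print(max_fine_eur(100_000_000))    # 7% is 7,000,000, so the fixed cap applies: 35000000
print(max_fine_eur(2_000_000_000))  # 7% is 140,000,000, which exceeds the cap: 140000000
```

The two amounts are equal at a turnover of 500 million euros. Below that, the 35 million euro figure sets the ceiling; above it, the 7% figure does.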

The Act also introduces specific rules for general-purpose AI models, particularly those with systemic risks. Providers of powerful foundation models must evaluate and mitigate systemic risks, ensure adequate cybersecurity protections, report serious incidents to the European Commission, and maintain energy-efficient development practices. This addresses concerns about large language models and other powerful AI systems that can be adapted for numerous downstream applications.

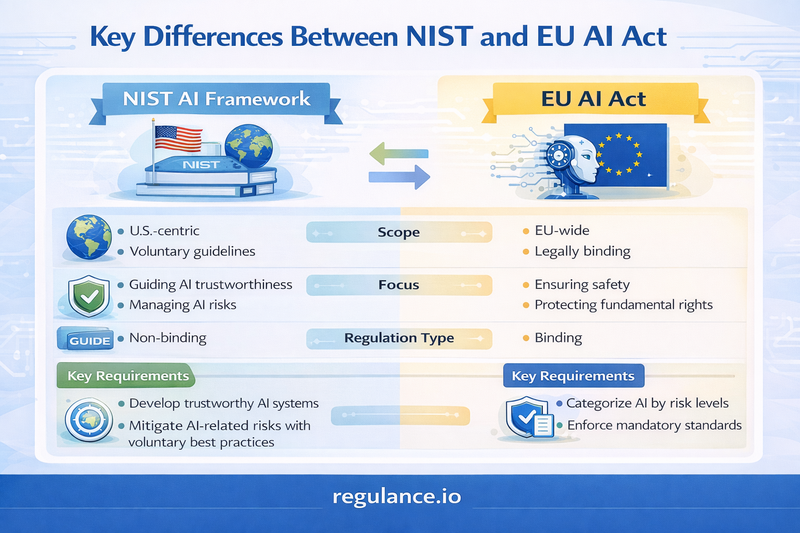

Key Differences Between NIST and EU AI Act

While both frameworks aim to promote trustworthy AI, they differ fundamentally in their nature, scope, and implementation. Understanding these differences is crucial for organizations deciding which framework to follow.

Legal Nature and Enforcement

NIST is a voluntary framework with no legal enforcement mechanism. Organizations adopt it because they recognize its value for risk management, want to demonstrate responsible AI practices, or need to meet industry expectations. There are no penalties for not following NIST, though government contractors and entities in regulated industries may face indirect pressure to adopt it.

The EU AI Act is mandatory legislation with serious enforcement consequences. Organizations placing AI systems on the EU market must comply or face substantial fines, product recalls, and potential market bans. The regulation creates legal obligations that can be enforced through national authorities and EU institutions.

Scope and Applicability

NIST applies broadly to any organization developing or deploying AI, regardless of location or sector. Its technology-neutral approach means it can be used for any AI system, from simple automated decision tools to complex neural networks. The framework doesn't differentiate between risk levels in its structure; organizations determine their own risk assessments.

The EU AI Act has a jurisdictional and use-case-specific scope. It applies to providers placing AI systems on the EU market and users located within the EU. The regulation explicitly categorizes different types of AI applications by risk level, with requirements varying dramatically based on this classification. This means two AI systems can face vastly different regulatory burdens under the EU AI Act.

Implementation Approach

NIST offers flexibility in implementation. Organizations can adopt the entire framework or select specific functions relevant to their needs. The framework provides guidance rather than requirements, allowing companies to interpret and apply principles based on their context. This adaptability makes NIST suitable for organizations at different maturity levels.

The EU AI Act prescribes specific requirements that must be met. For high-risk systems, there's little room for interpretation; providers must implement technical documentation, risk management systems, data governance processes, and conformity assessments as specified. While some flexibility exists in how these requirements are achieved, the outcomes are clearly defined and auditable.

Focus and Philosophy

NIST emphasizes organizational culture, continuous improvement, and stakeholder engagement. The framework treats risk management as an ongoing process that evolves with technology and organizational learning. It encourages organizations to build internal capabilities and develop mature AI governance practices over time.

The EU AI Act focuses on protecting fundamental rights, ensuring safety, and establishing market rules. The regulation sets minimum standards that all compliant AI systems must meet before deployment. Its philosophy centers on preventing harm through ex-ante regulation rather than relying solely on organizational self-governance.

Documentation and Transparency

NIST recommends documentation as a best practice for transparency and accountability but doesn't mandate specific formats or content. Organizations decide what to document based on their risk assessments and stakeholder needs.

The EU AI Act requires extensive, standardized documentation for high-risk systems. This includes technical documentation, conformity declarations, instructions for use, and registration in EU databases. The documentation must be maintained and updated throughout the system's lifecycle, and must be available for regulatory inspection.

When Do You Use Each Framework or Both?

When to Follow NIST

Organizations should prioritize the NIST framework when they operate primarily in the United States or other markets without mandatory AI regulations. NIST is particularly valuable for companies in their early stages of AI adoption who need structured guidance to build responsible AI practices. Startups and small businesses benefit from NIST's flexibility, as they can scale their risk management efforts as they grow.

NIST also serves organizations that want to establish comprehensive AI governance beyond mere compliance. If your goal is to build organizational capabilities, foster a culture of responsible innovation, or establish industry leadership in AI ethics, NIST provides the framework to achieve these objectives. Government contractors and entities working with federal agencies may find NIST adoption increasingly expected, even if not legally required.

When to Follow the EU AI Act

Compliance with the EU AI Act is non-negotiable for any organization that places AI systems on the EU market or has users within the European Union. This includes EU-based companies, international corporations selling in Europe, and even non-EU entities whose AI systems are used by EU residents. If your AI system falls into the high-risk category under EU definitions, you must comply regardless of your location.

Organizations planning to expand into European markets should begin EU AI Act compliance early. The regulation's requirements for technical documentation, conformity assessments, and quality management systems take significant time to implement. Waiting until you're ready to enter the EU market can delay your expansion by months or even years.

When to Use Both Frameworks

Many organizations will benefit from implementing both frameworks in a complementary manner. Global companies operating in both the US and EU markets need to satisfy EU legal requirements while building the organizational capabilities that NIST promotes. Using NIST as a foundation for AI governance and then layering EU AI Act compliance requirements on top creates a robust, comprehensive approach.

The combination is particularly powerful because NIST's "Govern" and "Map" functions help organizations build the infrastructure needed to meet EU AI Act requirements. For example, NIST's emphasis on stakeholder engagement and context mapping prepares organizations to conduct the impact assessments required under EU regulations. Similarly, NIST's measurement and management functions align well with the EU's requirements for ongoing monitoring and incident reporting.

Organizations pursuing best-in-class AI risk management should consider both frameworks regardless of legal requirements. NIST provides the cultural and procedural foundation, while the EU AI Act offers concrete, enforceable standards. Together, they create a comprehensive risk management system that not only satisfies regulators but also builds stakeholder trust and reduces long-term business risks.
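The guidance in this section can be condensed into a small decision helper. This is a simplified sketch of the reasoning above, not legal advice: the `OrgProfile` fields, the status strings, and the `framework_guidance` function are hypothetical names, and the logic ignores details such as risk category and sector.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class OrgProfile:
    """Hypothetical summary of an organization's exposure to the EU market."""
    places_ai_on_eu_market: bool
    has_eu_users: bool
    plans_eu_expansion: bool = False


def framework_guidance(profile: OrgProfile) -> Dict[str, str]:
    """Map an organization's profile to a status for each framework."""
    if profile.places_ai_on_eu_market or profile.has_eu_users:
        eu_status = "mandatory"
    elif profile.plans_eu_expansion:
        eu_status = "start preparing early"
    else:
        eu_status = "not currently applicable"
    return {
        "EU AI Act": eu_status,
        # NIST stays voluntary in every case; the text recommends it as a foundation.
        "NIST AI RMF": "voluntary, recommended as governance foundation",
    }


us_startup = OrgProfile(places_ai_on_eu_market=False, has_eu_users=False,
                        plans_eu_expansion=True)
print(framework_guidance(us_startup)["EU AI Act"])  # start preparing early
```

The helper treats NIST as a constant recommendation and the EU AI Act as the variable, which mirrors the article's argument that the real choice is when EU obligations begin, not which framework to pick.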

Challenges in Combining Both Frameworks

While combining NIST and the EU AI Act offers comprehensive coverage, organizations face several practical challenges in harmonizing these different approaches.

Resource Intensity

Implementing both frameworks simultaneously requires substantial investment in personnel, technology, and processes. Organizations need experts who understand both frameworks, can map requirements between them, and can design systems that satisfy both. Small and medium enterprises may struggle with the resource burden, particularly when they lack dedicated compliance teams or AI governance specialists.

Different Conceptual Approaches

NIST's continuous improvement philosophy can sometimes clash with the EU AI Act's compliance-oriented mindset. NIST encourages experimentation and iterative learning, while the EU regulation demands that certain standards be met before market entry. Balancing innovation velocity with regulatory compliance requires careful planning and potentially separate tracks for different AI initiatives.

Documentation Overhead

The EU AI Act's documentation requirements are extensive and specific, while NIST's documentation guidance is more flexible. Organizations following both frameworks may find themselves maintaining multiple documentation systems: one flexible for internal use and continuous improvement, another rigid for regulatory compliance. This duplication creates maintenance challenges and increases the risk of inconsistencies.

Evolving Standards

Both frameworks continue to evolve as AI technology advances and regulatory understanding deepens. The EU AI Act will be supplemented with delegated acts and harmonized standards, while NIST may release sector-specific guidance or updated versions. Organizations must stay current with both sets of changes, anticipate future requirements, and build adaptable systems that can accommodate regulatory evolution.

Organizational Silos

In large organizations, NIST implementation might fall to risk management or AI development teams, while EU AI Act compliance becomes a legal or regulatory affairs responsibility. This separation can lead to duplicated efforts, misaligned priorities, and gaps in coverage. Breaking down these silos requires strong governance structures and cross-functional collaboration that many organizations find challenging to establish.

Frequently Asked Questions

Is NIST legally required in the United States?

No, the NIST AI Risk Management Framework is voluntary for private sector organizations. However, federal agencies and government contractors may be required or strongly encouraged to follow NIST guidelines. Some states and industries are also beginning to reference NIST in their own regulations, making it increasingly important even without federal mandates.

Does following NIST guarantee EU AI Act compliance?

No, NIST alone does not ensure EU AI Act compliance. While NIST provides an excellent foundation for AI risk management, the EU AI Act has specific legal requirements, documentation standards, and conformity assessment procedures that must be explicitly addressed. Organizations need to supplement NIST with EU-specific compliance measures.

What happens if I violate the EU AI Act?

Violations can result in administrative fines up to 35 million euros or 7% of global annual turnover, whichever is higher. Penalties vary based on the severity and nature of the violation. Organizations may also face product recalls, market bans, and reputational damage. Repeat violations or egregious conduct can lead to more severe consequences.

Can small businesses implement these frameworks?

Yes, though the approach differs by framework. NIST is highly scalable: small businesses can implement core principles appropriate to their size and gradually expand their practices. The EU AI Act applies regardless of company size, but only if your AI systems fall under its scope. If you're a small business with high-risk AI systems targeting the EU market, compliance is mandatory but can be achieved through third-party support and standardized solutions.

How often should I review my AI risk management practices?

NIST recommends continuous monitoring and regular reviews, with many organizations conducting formal reviews quarterly or semi-annually. The EU AI Act requires ongoing monitoring throughout the AI system's lifecycle and mandates incident reporting. Both frameworks emphasize that risk management is not a one-time exercise but an ongoing commitment that must adapt to changing circumstances, technologies, and risks.

Are there other AI regulations I should be aware of?

Yes, the AI regulatory landscape is rapidly evolving globally. Canada is developing the Artificial Intelligence and Data Act, China has implemented regulations for recommendation algorithms and deepfakes, and various US states are proposing their own AI laws. Organizations should monitor regulatory developments in all markets where they operate and consider how different frameworks interact.

Conclusion

The choice between NIST and the EU AI Act is less an either-or decision than a question of how to strategically combine them. NIST provides the cultural foundation and flexible methodology for building mature AI risk management capabilities, while the EU AI Act establishes the legal baseline for operating in one of the world's largest markets.

Organizations that view compliance as merely checking boxes miss the deeper opportunity these frameworks present. When implemented thoughtfully, both NIST and the EU AI Act push organizations toward more responsible, transparent, and trustworthy AI systems. They force difficult conversations about values, priorities, and tradeoffs. They demand that organizations look beyond immediate technical performance to consider broader societal impacts.

The future of AI governance will likely see increasing convergence between voluntary frameworks like NIST and mandatory regulations like the EU AI Act. Organizations that invest now in robust risk management practices, whether legally required or not, will find themselves better positioned for whatever regulatory changes lie ahead. They'll also build stronger relationships with customers, partners, and communities who increasingly expect AI systems to be not just powerful, but responsible.

Ultimately, the question isn't which framework to follow, but how to leverage both to build AI systems that are innovative, competitive, and worthy of trust. Organizations that embrace this challenge won't just survive the coming wave of AI regulation; they'll thrive because of it.
